Field Notes is a series where I read academic papers and technical blog posts so you don’t have to. I try to strip away the jargon and explain what was actually said, why it matters, and my thoughts on it.

Kiran Gopinathan is a computer scientist specializing in AI, programming languages and proof maintainance. She recently wrote an article about a fundamental problem with multi-agentic software development which I’ll be reviewing here.

Review

Definition: Verification researchers study methods to ensure the accuracy, reliablility, and validity of data, models, or systems. They help in developing techniques to confirm that outputs align with intended specifications. Her perspective is uniquely positioned for the topic at hand.

Gopinathan points out the apathy among verification researchers towards managing agents. She claims that their approach amounts to “wait a few months and multi-agentic LLM systems will solve the coordination problem because of new advancements in ability”.

Definition: Distributed systems is a collection of independent computers/software processes working together (usually over a network) to achieve a common goal.

This coordination problem can be summarized as the struggle that current multi-agentic systems have building large-scale software on their own due to their inability to coordinate well. She notes how fundamentally these are distributed systems problems which have been known for decades with mathematical proofs regarding limits that no AGI can circumvent; so the “solution” of more intelligent/powerful models will not solve the problem.

What follows is her attempt to formalize the problem, which I’ll break down, and then connect it to some of these distributed systems problems.

Formal Model Explained

She starts with the satiric prompt:

Claude. Make me an app to do X. Make no mistakes.

Then proceeds with a definition:

$$ \Phi(P) := \lbrace \phi\ |\ \phi\ \text{is a program} \land \phi\ \text{is consistent with prompt } P \rbrace $$

- $P$ is defined as a prompt for the model.

- $\Phi(P)$ is defined as the set of software consistent with the prompt

- The stuff in the braces means that

- $\phi$ (small phi), is a program

- $\land$ means logical and

- The program is consistent with the prompt

Note that $\Phi \neq \phi$ here. $\Phi(P)$ represents the set defined above. Whereas $\phi$ is a program (an element), within that set. So $\phi_2$ is a (one of many) program(s) that’s consistent with the prompt.

The beauty of this definition is that because LLMs use natural language (as opposed to formal languages which are more precise, like a programming language), ambiguity is always a factor, and since LLMs are non-deterministic (they’re probabilistic), it can select from a very large set of the set of software $\Phi(P)$ that still satisfies the given prompt. One might say, “okay so narrow the set by being more specific,” but in the end, you’re giving it a natural, not a formal language. So, it’ll always be a problem unless you become so specific you’re speaking formally again.

Next she defines the following,

$$ C(\phi_1, \cdots, \phi_n) := \exists \phi \in \Phi(P), \forall i, \phi_i \text{ refines } \phi $$

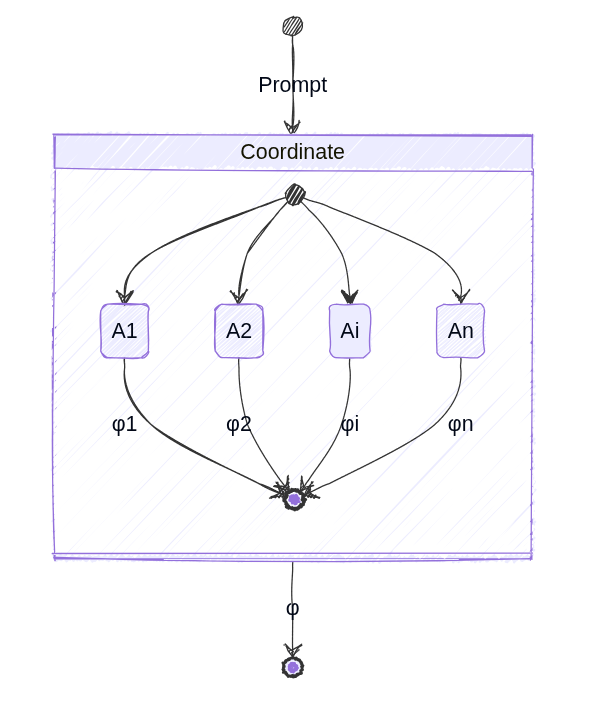

This definition is a bit ambiguous since $\phi$ is overloaded here. But she explains that a bunch of agents $A_1, \cdots, A_n$, build all of the software components (the subscripted $\phi_i$), so say $A_1$ builds software component $\phi_1$, and $A_2$ builds component $\phi_2$, etc. All it’s saying is there exists a program that’s consistent with the prompt, such that every software component built by different agents refine the general program that’s consistent with the prompt. In other words, the subcomponents/programs built by subagents will be put together such that they’ll make the overall intended program given by the prompt realized.

Definition: A distributed consensus problem is a classic problem in distributed systems where multiple computers (agents in our case) need to agree on a single decision/value. The trick is that they’re all working independently and communication isn’t perfect. Consensus is further complicated because some might fail or have incomplete information.

What this means is that a prompt comes in, each agent $A_i$ independently receives the prompt, independently interprets its meaning from the whole set of meanings it could have, creates the subcomponent, then assembles it to the final program by stitching all subcomponents together for a cohesive whole. Well, that’s the goal anyway.

This is a consensus problem because $A_1$ might produce component $\phi_1$ that needs to interface/interact with $\phi_2$ produced by $A_2$ and so on. In other words, the choice of one agent, constrains the choices of others (there’s no point in making duplicate API services for example), and also those choices will determine the integration and other design/architectural decisions of the other components made by other agents that require it. And all of this is done in parallel.

She anticipates some rebuttals which are worth reading about, the most noteworthy being that there will always be more than 1 program that meets the specification because we’re dealing with a natural language specification, and that a single supervisor directing the process doesn’t solve the problem, something I’ve admittedly been working on privately, so that sucks lol.

Impossibility Results and Byzantine Generals

She starts off by presenting the FLP Theorem, which stems from a classic paper in distributed systems proving the impossibility of distributed consensus when a faulty process exists:

FLP Theorem (Fischer et al., 1985): In any distributed system with arbitrary delays on messages and the possibility of at least 1 node crash failure, no deterministic protocol can ensure all non-faulty nodes reach consensus in bounded time.

According to Gopinathan, this result applies to multi-agentic systems and the implications are that there will never be a guarantee that they deliver the user’s intended application, and that they will reach a coherent consensus on the final delivery at the same time.

She does mention another paper (Chandra and Toueg) that describes one way to yield consensus is a failure detector that checks the “heartbeat” of every agent.

There’s a famous problem she draws upon called The Byzantine Generals Problem, that uses the analogy of Byzantine Generals surrounding a city and have to coordinate their actions through a messenger, but some generals in that network may be traitors and deliberatly passing along malicious or arbitrary messages. This sort of Byzantine model gives a worst case scenario for reliability/correctness of such systems given malicious or arbitrary behavior of the participants (akin to Big-O for worst-case algorithm performance, but with adversarial/malicious/arbitrary “intent”). Lamport et. al. showed that this problem is only solvable if more than two-thirds of the generals are loyal, i.e. if you have only two generals, you’re screwed. To quote the original Lamport paper,

[…] we can show that no solution with fewer than $3m + 1$ generals can cope with $m$ traitors.

I think she’s on to something here with this analogy, but doesn’t go far enough. She admits that the agents might not be intentionally trying to disrupt the process, but says it’s more about them misrepresenting/misunderstanding the prompt. I think we have to factor in a new kind of Byzantine General: the incompetent general with good/otherwise neutral intentions, or a Dunning-Kruger general. The malicious case still exists for the case of growing exploits of agentic systems among clever hackers, but even if we’re dealing with an uncompromised system of agents, they still hallucinate (make stuff up), which is akin to a general that’s too proud to admit they’re at the limits of their capability with a given task, so they just do something. We’ve all had managers like this.

I’ll quote Gopinathan directly for the implications of this analogy,

The key observation here is that Lamport’s result is a hard bound on the maximum number of misinterpretations we can tolerate for software development to actually be possible. This isn’t something that will be solved by smarter agents or better LLMs.

One might think, “okay, then we simply increase the number of agents to dilute the proportion of those that might be hallucinating or outright malicious due to prompt injection or another clever exploit”. But that only compounds the coordination problem, and we’re back at FLP by increasing the probability that at least one will fail.

She concludes by recognizing that people are currently delivering vibe coded multi-agentic software. But, as with everything in computer science, how does that scale? They’re using ad hoc solutions for the coordination problem with zero gaurantees. Which is risky business when the output is handling financial data, critical infrastructure or chemotherapy delivery.

Conclusion

The standard practice in LLMs among its producers are to throw more data and compute power at it, and so far, that’s worked. But we’ve been here before. With CPUs, we were able to scale processor speeds up until reliability broke down. Then we had to adopt multi-processor techniques, which introduced a host of other fundamental problems and limits. Network topologies have a similar history, as have other areas in computing, and I suspect this is yet another example of this fundamental scaling limit manifesting that requires us to open up the hood and understand the boundaries. The consequences aren’t dire when it’s a personal exercise app, but reliability is a must if we want to hand over the keys to these systems at scale and to more critical systems. Failing to do so is an invitation to disaster.